New Year’s Eve 2019 is only a few weeks away. The end of the decade. The last day of the 2010s, then the first of the 2020s.

Or is it?

This post is about calendars and how we assign numbers to years. I’ll explain why, if you look closely at them, years like 120 BC, AD 14, and 2019 aren’t as straightforward as you might think. Then I’ll describe the off-by-one error that has led to all this confusion rippling down through the centuries: the year that should be year 0 but isn’t. Finally, I’ll suggest a different way of counting years that makes most of this confusion go away.

Let’s start off with a classic exchange from Seinfeld (S8E20, “The Millennium”):

Jerry: By the way, Newman, I’m just curious, when you booked the hotel, did you book it for the millennium new year?

Newman: As a matter of fact, I did.

Jerry: Oh that’s interesting, because, as everyone knows since there was no year zero, the millennium doesn’t begin until 2001, which would make your party one year late, and thus, quite lame. Oooooh.

Newman: *squawnk*

Wait, what? I’m old enough to remember that the big millennium new year was on the evening of December 31, 1999. Wasn’t it? I’m pretty sure it was.

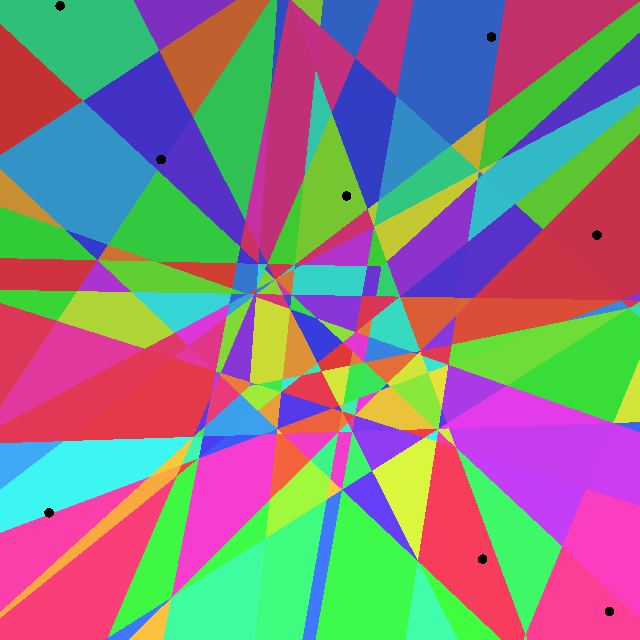

What’s in a millennium?

Let’s say Jerry is right – and in fact he is – that this millennium only started in 2001. What does that make the year 2000? Which century is it in, the twentieth or the twenty-first? The twentieth century is the 1900s, right, so surely it’s not in that? If the 1900s means anything, it must be the years that start with 19. So it must be the twenty-first century? But then that would mean that on New Year’s Eve 1999 we went from the twentieth to the twenty-first century. But since we’re assuming Jerry is right, we then had to wait until 2001 to go from the second to the third millennium. That sounds all wrong, though; centuries and millennia must match up, and decades too. Speaking of which, which decade is 2000 in? Surely it’s not in the 1990s, right, surely. Right?

Okay, so you may suspect that I’m deliberately making this sound more confusing than it is, and I am; sorry about that. It does actually all make sense – sort of – but it is still a little unexpected.

The core question is: what is a millennium, a century, a decade? The answer is that any period of 1000 years is a millennium, any period of 100 years is a century, and any period of 10 years is a decade. So the years 1990-1999 are a decade, but so are 1945-1954. Similarly, the years 1000-1999 are a millennium, but so are 454-1453. None of these terms has anything to do with where on the timeline the period falls; they’re just periods of a particular number of years.

This means that the first millennium is the first sequence of 1000 years, wherever they fall on the timeline. As Jerry says, because there is no year 0 the first year is AD 1. A thousand years later is AD 1000 so that’s the first millennium: AD 1 up to and including AD 1000. The first year of the second millennium is AD 1001 and the last is AD 2000. So we did indeed only enter the new millennium in 2001.

It works the same way for centuries and decades. The first century was AD 1 to AD 100, the second was AD 101 to AD 200, and so on. Following it all the way up to our time, you get that the twentieth century covers 1901 to 2000. So I was really lying before when I said that the twentieth century was the same as the 1900s. Each has one year that the other doesn’t: the 1900s include 1900, which is in the nineteenth century, and the twentieth century includes 2000, which is not in the 1900s.
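To make the off-by-one concrete, here is a minimal Python sketch of the traditional count, where the first period starts in AD 1. The function names are my own, hypothetical ones, not from any library, and the sketch only handles AD years.

```python
# Sketch only: hypothetical helpers for the traditional (no year 0) count.
def ordinal_period(year: int, length: int) -> int:
    """Ordinal number of the period (length=100: century, length=1000:
    millennium) that an AD year falls in, counting from AD 1."""
    if year < 1:
        raise ValueError("expects an AD year, 1 or later")
    # Subtract 1 first because the first period starts at AD 1, not at 0.
    return (year - 1) // length + 1


def period_bounds(n: int, length: int) -> tuple[int, int]:
    """First and last AD year of the n-th period of the given length."""
    return (n - 1) * length + 1, n * length


print(ordinal_period(2000, 100))   # 20: 2000 is in the twentieth century
print(ordinal_period(2000, 1000))  # 2: ...and still in the second millennium
print(ordinal_period(2001, 1000))  # 3
print(period_bounds(20, 100))      # (1901, 2000)
```

The `year - 1` is the whole story: it is the correction you need because the count starts at 1 rather than 0.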

Similarly, to come back to this coming New Year’s Eve: the 2010s do indeed end when we leave 2019 – but the second decade of our century actually keeps going for another year, until the end of 2020. So it’s the end of the decade, but it also isn’t, because “this decade” has two possible meanings.
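The two meanings of “this decade” can be put side by side in a short Python sketch. The helper names are hypothetical; `named_decade` groups years by their first three digits, while `ordinal_decade` counts decades within a century starting from year 1, as in the traditional scheme.

```python
# Sketch only: hypothetical helpers contrasting the two meanings of "decade".
def named_decade(year: int) -> str:
    """The decade by its everyday name, e.g. 2019 -> '2010s'."""
    return f"{year // 10 * 10}s"


def ordinal_decade(year: int) -> tuple[int, int]:
    """(century, decade within that century), counting from AD 1 (no year 0)."""
    century = (year - 1) // 100 + 1
    decade = (year - 1) % 100 // 10 + 1
    return century, decade


for y in (2019, 2020, 2021):
    print(y, named_decade(y), ordinal_decade(y))
# 2019 2010s (21, 2)
# 2020 2020s (21, 2)
# 2021 2020s (21, 3)
```

2020 starts a new named decade but is still in the second decade of the twenty-first century.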

Jesus!

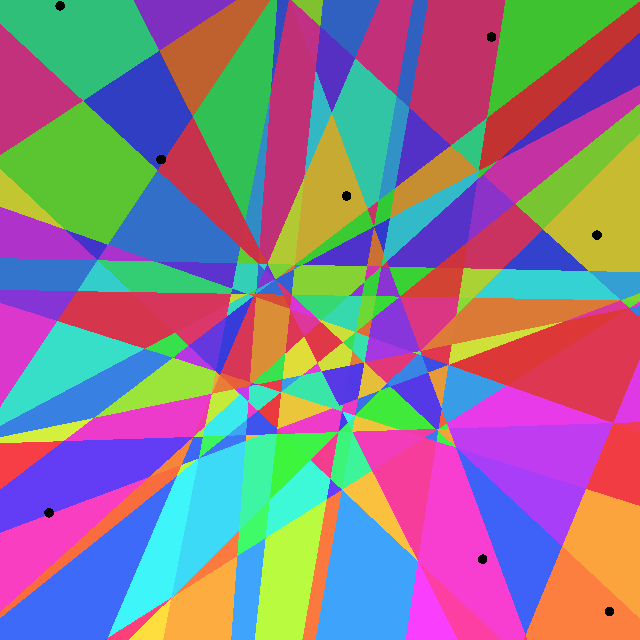

Let’s take a quick detour and talk about what AD means. We usually leave out the “AD” when we talk about modern years, nobody calls it 2019 AD, but the AD is there implicitly. And it’s what all this confusion springs from.

AD means Anno Domini, literally “in the year of the lord”, the lord in question being Jesus. It’s really an exercise in religious history, one that goes back to the darkest Middle Ages, so maybe it’s not surprising if modern pedants like me stumble over the mathematics of it.

Already at this point, before we’ve even gone into where AD comes from, things become murky. Consider this. If 2019 means “2019 years since the birth of Jesus”, and we assume Jesus was born in December, how old would he turn in December 2019? Presumably 2019 should be the year he turns 2019, right? But if he was born in AD 1, his first birthday would have been in AD 2, and 2019 would be the year of his 2018th birthday. He would have had to be born in 1 BC for the years to match up. But 1 BC is expressly not a “year of the lord”. Either the years don’t match up or Jesus – the actual lord – lived, at least briefly, in a year that wasn’t “of the lord”.
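The birthday arithmetic is easier to check if you first put all years on one number line that includes a year 0. This Python sketch assumes the convention astronomers use (1 BC becomes 0, 2 BC becomes -1, and so on); the function names are hypothetical.

```python
# Sketch only: hypothetical helpers; assumes astronomical year numbering.
def astronomical(year: int, era: str) -> int:
    """Map a BC/AD year onto a line with a year 0: AD n -> n, n BC -> 1 - n."""
    if year < 1 or era not in ("BC", "AD"):
        raise ValueError("expects a year >= 1 with era 'BC' or 'AD'")
    return year if era == "AD" else 1 - year


def birthday_number(birth: tuple[int, str], year: tuple[int, str]) -> int:
    """Which birthday falls in `year` for someone born in `birth`."""
    return astronomical(*year) - astronomical(*birth)


print(birthday_number((1, "AD"), (2019, "AD")))  # 2018
print(birthday_number((1, "BC"), (2019, "AD")))  # 2019
```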

The first person to count years Anno Domini was a monk, Dionysius the Humble. (Side note: imagine a person so humble that he stands out among monks as the humble one.) Back then, in the early 500s, the most common way to indicate a year was by who was Roman consul that year. The year Dionysius invented AD would have been known to him and everyone else at the time as “the year of the consulship of Probus Junior”. But he was working on a table of when Easter would fall for the following century, and when you’re dealing with years 50 or 100 years into the future, how do you denote them? You don’t know who will be consul. Dionysius decided to instead count years “since the incarnation of our Lord Jesus Christ”. By this scheme his present year was 525. How did he decide that at that moment it had been 525 years since the “incarnation”? We don’t really know, though some people have theories.

Note that this means that when historians argue that Jesus was more likely born between 6 BC and 4 BC, they’re not disagreeing with the Bible; the Bible doesn’t specify a particular year. It was Dionysius, much later, who decided the year, so he’s the one they’re disagreeing with.

In any case, Dionysius wasn’t clear in his writing about what “since the incarnation” actually means, so we don’t know whether he believed Jesus was born in 1 BC or AD 1. The question from before about Jesus’ age doesn’t have a clear answer, at least not based on how years are counted. Most assume he meant 1 BC, so that the years and Jesus’ birthdays match up, but we’re really not sure.

Year Zero

Now let’s backtrack a little to where we were before this little historical diversion: the off-by-one error in how we count years. The year numbers (1900s) and the ordinals (twentieth century) are misaligned.

It actually gets worse when you bring BC into it.

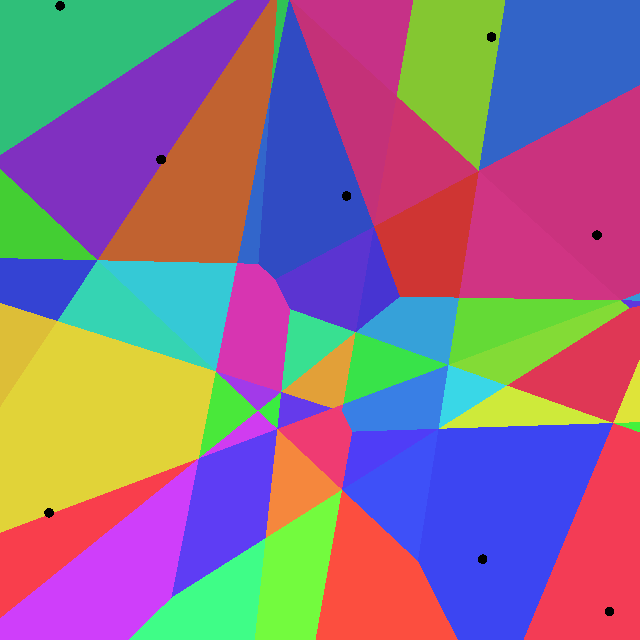

Here’s a little quiz. Take a moment to work out the answers, but don’t feel bad if you give up; they’re meant to be a little tricky. Also, don’t worry about exact dates: assume that all the events happened on the same calendar date.

- The Napoleonic wars started in 1803 and ended in 1815. How many years was this?

- Johann Sebastian Bach was born in 1685. Was that towards the beginning or the end of the 1600s? He died in 1750. How many years did he live?

- Julius Caesar was in a relationship with Cleopatra. He was born in 100 BC, she in 69 BC. What was their age difference?

- Mithridates the Great was born in 120 BC. Was that towards the beginning or the end of the 100s BC? He died in 63 BC. How many years did he live?

- Phraates V ruled Persia from 4 BC to AD 2. How many years did he rule?

Notice how the questions are basically the same but get harder? I’m the one who made them up, and I struggled to solve the last three myself, or at least struggled to convince myself that my answers were correct. But let’s go through the answers.

- The Napoleonic wars lasted 1815 – 1803 = 12 years.

- The year Bach was born, 1685, is towards the end of the 1600s and he lived 65 years: 15 years until 1700 and 50 years after, 65 in total.

- The age difference between Caesar and Cleopatra was 31 years, because in BC the years count backwards: 31 years on from 100 BC is 69 BC.

- Mithridates was born towards the end of the 100s BC. Again, in BC the years count backwards, so 120 BC means there are 20 years left of that century, not that 20 years have passed as it would in AD. He lived 57 years: 20 years until 100 BC and then, again because the years count backwards in BC, 37 years until 63 BC.

- Phraates ruled for 5 years. You’d think it should be 6: 4 years BC and 2 years AD, 6 in total – but there is no year 0, we skip directly from 1 BC to AD 1, so it’s really 5.
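All five answers come out of the same subtraction once BC years are mapped onto a line with a year 0. A minimal Python sketch, assuming astronomical year numbering (n BC becomes 1 - n) and using hypothetical helper names:

```python
# Sketch only: hypothetical helpers; assumes astronomical year numbering.
def astronomical(year: int, era: str) -> int:
    """AD n -> n, n BC -> 1 - n (so 1 BC -> 0, 2 BC -> -1)."""
    if era == "AD":
        return year
    if era == "BC":
        return 1 - year
    raise ValueError(f"unknown era: {era!r}")


def years_between(start: tuple[int, str], end: tuple[int, str]) -> int:
    """Whole years from start to end, assuming the same calendar date."""
    return astronomical(*end) - astronomical(*start)


quiz = {
    "Napoleonic wars": ((1803, "AD"), (1815, "AD")),
    "Bach's life": ((1685, "AD"), (1750, "AD")),
    "Caesar/Cleopatra age gap": ((100, "BC"), (69, "BC")),
    "Mithridates' life": ((120, "BC"), (63, "BC")),
    "Phraates V's reign": ((4, "BC"), (2, "AD")),
}
for label, (start, end) in quiz.items():
    print(f"{label}: {years_between(start, end)}")
# Napoleonic wars: 12
# Bach's life: 65
# Caesar/Cleopatra age gap: 31
# Mithridates' life: 57
# Phraates V's reign: 5
```

The missing year 0 is handled by the `1 - year` mapping, so the reversal in BC and the skipped year never have to be reasoned about separately.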

This highlights two issues with how we count years. It’s easy to work with modern years – we do it all the time – but everything gets turned upside down when you go to BC: you have to fight your normal understanding of what a year means, for instance the 20s of a century are now at the end, not the beginning. And you have to watch out especially for the missing year between 1 BC and AD 1.

There are systems like astronomical year numbering that insert a year 0 (or rather, rename 1 BC to 0), and that helps a lot. But I don’t find it intuitive to work with negative years. For instance, I still have to turn off my normal intuition to see that -120 is before -115, not after.

I’m interested in ancient history, so I’ve stubbed my toe on this over and over again. I’ve also given some thought to what a more intuitive system might look like. What I’ve come up with, as a minimum, is this: you would want to redefine BC 1 to be 0 AD. You would want years to always move forward; it’s more intuitive for the years’ names to move the same way as time. Finally, you would want the years AD to stay unchanged, since those are the ones we use every day; there’s no way to change those.

How about this?

Here is a system that has those properties.

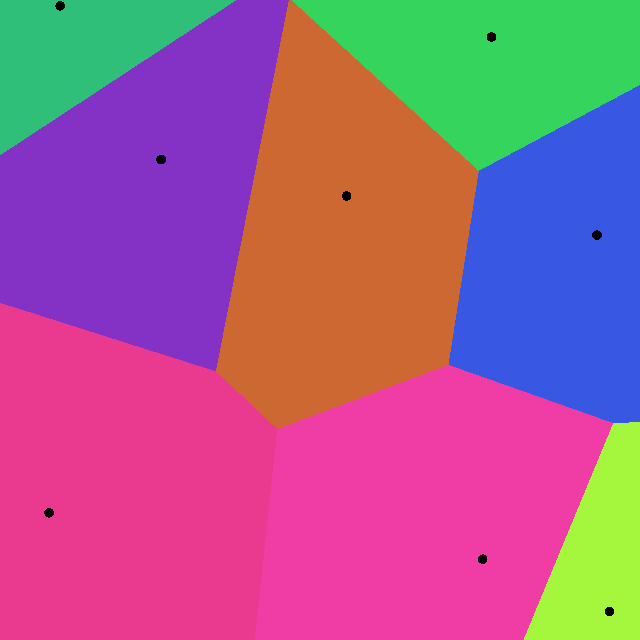

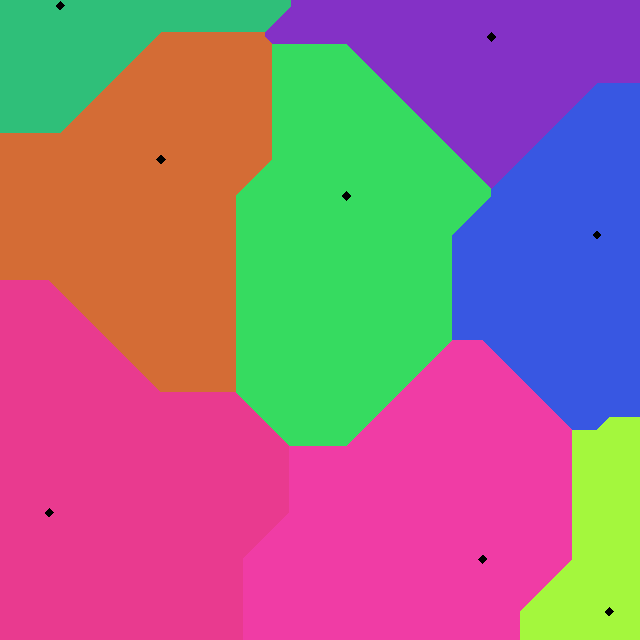

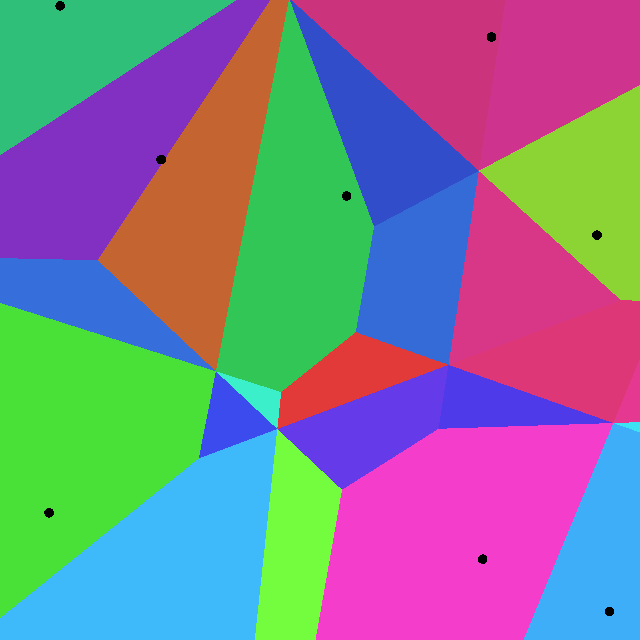

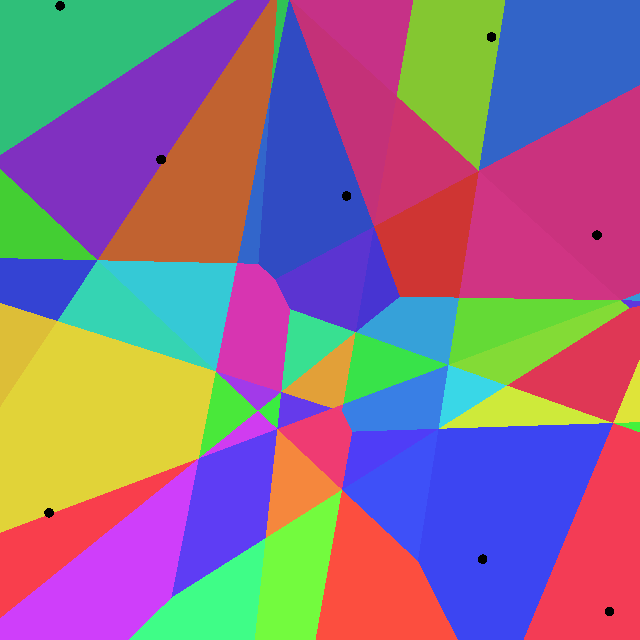

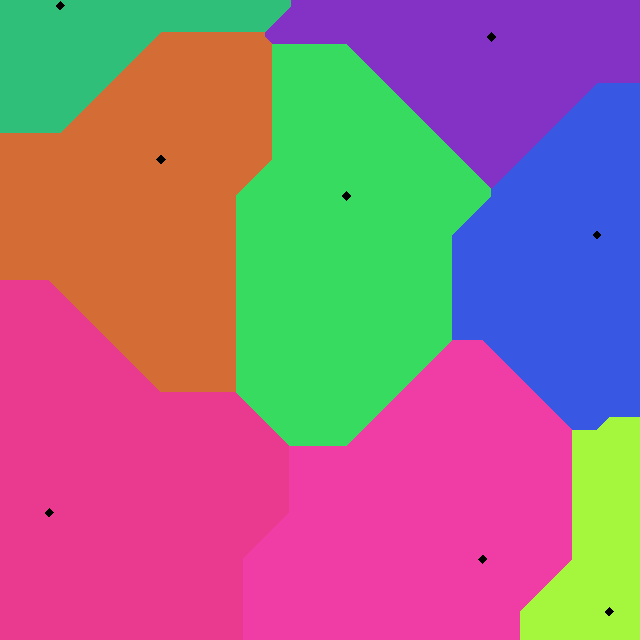

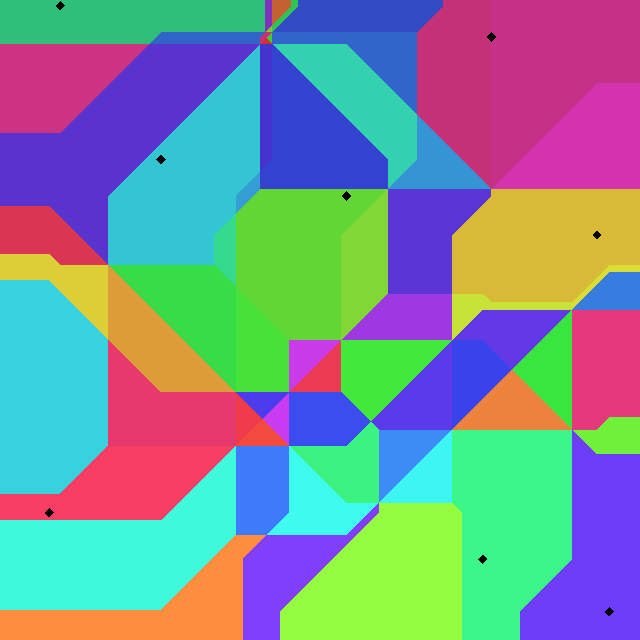

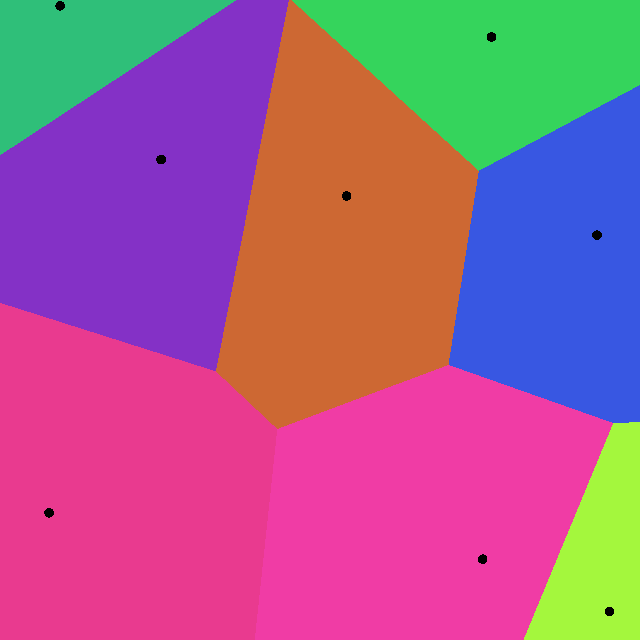

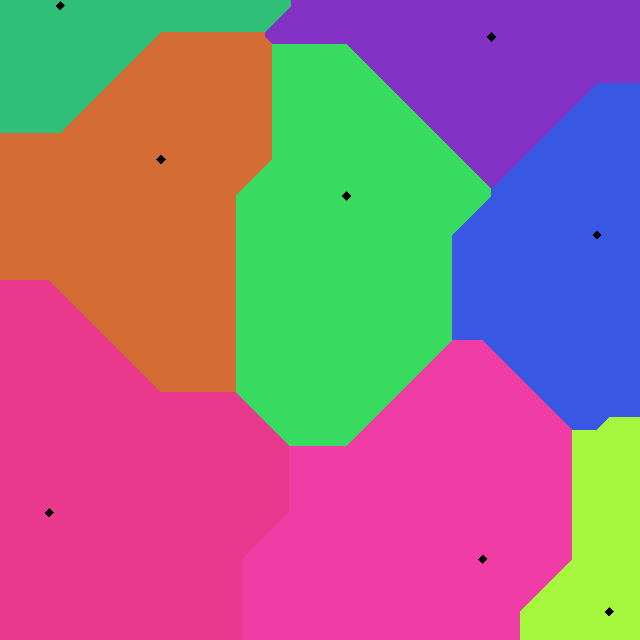

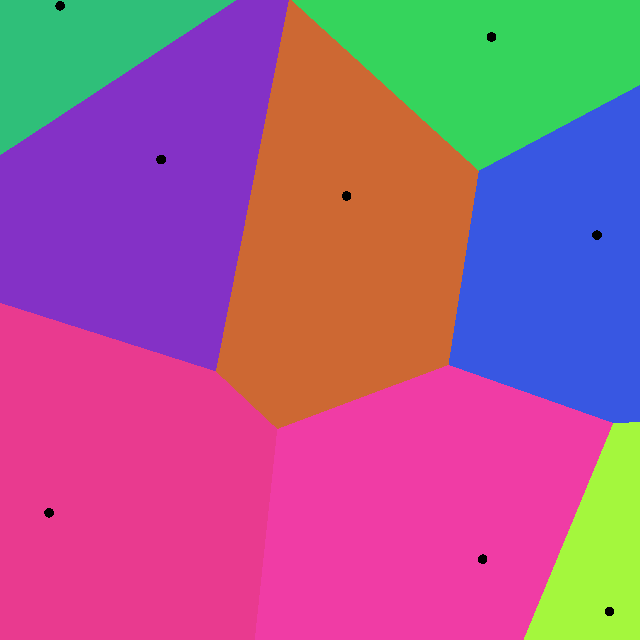

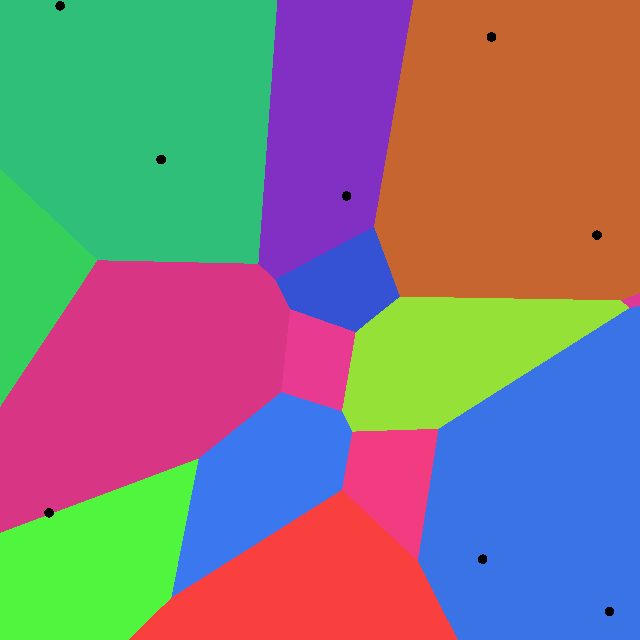

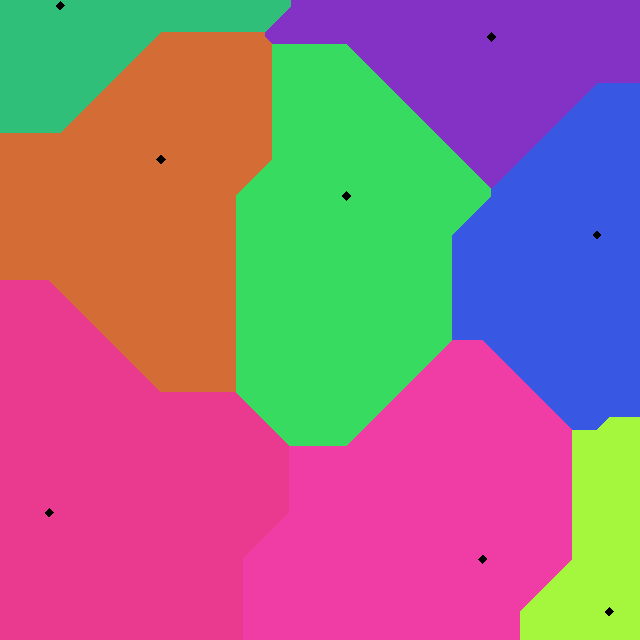

As in astronomical year numbering, you redefine BC 1 to be 0 AD. Other than that, AD stays unchanged. In BC you reverse how you count the years, but only within each century. So the years count up during both BC and AD, but the centuries BC still count backwards. Finally, to distinguish it from what we currently do, rather than writing BC after the number or AD before it, you write a B or an A between the century and the year. This also makes it clear that the centuries and the years within them are treated differently, sort of like how we usually separate hours and minutes with a colon: 7:45 rather than 745.

What does that look like? Here are some examples:

- 525 AD is unchanged but can be written as 5A25 if you want to be really explicit.

- BC 1 is year zero AD and is written as 0 or, again if you want to be really explicit, 0A00.

- BC 2 is the last year of the first century BC so that’s 0B99. Since we’re always counting forwards the last year of any century is 99, like centuries AD.

- BC 3 is the year before 0B99 so 0B98. When going backwards in time we count the years backwards so the year before is one less.

- BC 34 is 0B67 because it’s 33 years before the end of the first century BC. Just like 1967 is 33 years before 2000.

- BC 134 is 1B67, just like BC 34 is 0B67. Only the years within the centuries are changed; the centuries stay the same (except for years ending in 00 or 01, which move into the next century).
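One way to read the scheme (my own interpretation, not something stated above) is as astronomical year numbering split with floor division: the year part is always 00-99 and counts forward, and a negative century part q is written as century -q - 1 with a B. The Python sketch below, with a hypothetical function name, reproduces the examples in the list.

```python
# Sketch only: hypothetical converter; assumes the floor-division reading above.
def to_new_notation(year: int, era: str) -> str:
    """Convert a conventional BC/AD year to the century-letter-year form."""
    if year < 1 or era not in ("BC", "AD"):
        raise ValueError("expects a year >= 1 with era 'BC' or 'AD'")
    astro = year if era == "AD" else 1 - year  # 1 BC -> 0, 2 BC -> -1, ...
    century, within = divmod(astro, 100)       # floor division: within is 0..99
    if century >= 0:
        return f"{century}A{within:02d}"
    return f"{-century - 1}B{within:02d}"      # century -1 -> 0B, -2 -> 1B, ...


for y, era in [(525, "AD"), (1, "BC"), (2, "BC"), (3, "BC"), (34, "BC"), (134, "BC")]:
    print(f"{y} {era} -> {to_new_notation(y, era)}")
# 525 AD -> 5A25
# 1 BC -> 0A00
# 2 BC -> 0B99
# 3 BC -> 0B98
# 34 BC -> 0B67
# 134 BC -> 1B67
```

Python’s `divmod` rounds towards negative infinity, which is exactly what keeps the year part counting forward in BC.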

There’s a simple general rule for converting that doesn’t require you to think; I’ll get to that in a second. First I’ll illustrate that this is indeed more intuitive by revisiting some questions from before, now written in the new system:

- Julius Caesar was in a relationship with Cleopatra. He was born in 0B01, she in 0B32. What was their age difference?

- Mithridates the Great was born in 1B81. Was that towards the beginning or the end of the 1B00s? He died in 0B38. How many years did he live?

- Phraates V ruled Persia from 0B97 to 2. How many years did he rule?
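Since years now always count forward, an age or a reign is a plain subtraction once each label is turned into a single number. A minimal Python sketch, assuming labels are written as century digits, the letter A or B, and exactly two year digits, or as a plain AD year like “2”; the helper names are hypothetical.

```python
import re


# Sketch only: hypothetical parser for the proposed notation.
def parse_new_notation(text: str) -> int:
    """Turn a label into one number on a timeline where 0A00 (BC 1) is 0:
    '0B99' -> -1, '1B81' -> -119."""
    match = re.fullmatch(r"(\d+)([AB])(\d{2})", text)
    if match is None:
        return int(text)  # a plain year such as "2" or "2019" is an AD year
    century, letter, within = int(match[1]), match[2], int(match[3])
    if letter == "A":
        return century * 100 + within
    # B centuries count backwards: 0B is the hundred years just before 0A00,
    # 1B the hundred years before that, and so on.
    return -(century + 1) * 100 + within


def years_between(start: str, end: str) -> int:
    """Whole years from start to end, assuming the same calendar date."""
    return parse_new_notation(end) - parse_new_notation(start)


print(years_between("0B01", "0B32"))  # Caesar and Cleopatra: 31
print(years_between("1B81", "0B38"))  # Mithridates: 57
print(years_between("0B97", "2"))     # Phraates V: 5
```

Only the century part needs its sign flipped; the two year digits already point forward in time, which is what makes the subtraction work directly.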

I would suggest that it’s now a lot closer to how you normally think about years. Also, the AD years are no longer off by one: the first century starts in year 0 and runs 100 years, to year 99. The twentieth century runs from 1900 to 1999, so the millennium new year falls at the end of 1999. If only they’d used this system, Newman’s party wouldn’t have been late, and thus not lame.
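With a year zero, the ordinal of a period is just the leading digits plus one, with no correction needed. A short Python sketch of that, with hypothetical helper names, for AD years only:

```python
# Sketch only: hypothetical helpers for the year-zero scheme (AD years).
def ordinal_period(year: int, length: int) -> int:
    """Ordinal century (length=100) or millennium (length=1000) when the
    first period starts at year 0."""
    return year // length + 1


def period_span(n: int, length: int) -> tuple[int, int]:
    """First and last year of the n-th period of the given length."""
    return (n - 1) * length, n * length - 1


print(period_span(1, 100))         # (0, 99)
print(period_span(20, 100))        # (1900, 1999)
print(ordinal_period(1999, 1000))  # 2
print(ordinal_period(2000, 1000))  # 3
```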

The numbers are written differently, at least in BC where they actually are different, so it’s clear which system is being used. Converting from BC is straightforward.

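- Any year BC that ends in 01 becomes year 00 of the next century (the next one in time, which for centuries BC has the lower number). For instance, 201 BC (the year the Second Punic War ended) becomes 1B00 and 1001 BC (the year Zhou Mu Wang became king) becomes 9B00.

- Any year BC that ends in 00 likewise moves to the next century, as year 01. For instance, 100 BC (the year Julius Caesar was born) becomes 0B01.

- For any other year, you keep the century and subtract the year from 101. For instance, for 183 BC (the year Scipio Africanus died), the century stays 1 and the year is 101 – 83 = 18. So that’s 1B18.

(Technically you don’t need the first two rules: for a year ending in 01 or 00, 101 – 01 = 100 and 101 – 00 = 101, which you can interpret as moving one century forward in time, to the next-lower century number, and keeping the last two digits, 00 or 01.)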
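The digit rules translate almost line for line into code. A Python sketch with a hypothetical function name; years ending in 00, such as 100 BC, also move to the next century (as year 01), which matches the 0B01 used for Caesar’s birth year, and 1 BC is special-cased as year zero AD.

```python
# Sketch only: hypothetical function applying the digit rules to a year BC.
def bc_to_new_by_rule(bc_year: int) -> str:
    """Convert a year BC to the new notation using the digit rules."""
    if bc_year < 1:
        raise ValueError("BC years start at 1")
    if bc_year == 1:
        return "0A00"  # 1 BC is year zero AD
    century, last_two = divmod(bc_year, 100)
    if last_two == 1:  # ends in 01: year 00 of the next (lower-numbered) century
        return f"{century - 1}B00"
    if last_two == 0:  # ends in 00: year 01 of the next (lower-numbered) century
        return f"{century - 1}B01"
    # Otherwise: keep the century and subtract the last two digits from 101.
    return f"{century}B{101 - last_two:02d}"


for bc in (201, 1001, 183, 100):
    print(f"{bc} BC -> {bc_to_new_by_rule(bc)}")
# 201 BC -> 1B00
# 1001 BC -> 9B00
# 183 BC -> 1B18
# 100 BC -> 0B01
```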

It’s maybe a little cumbersome for the person writing if they’re used to BC/AD, but it’s the same kind of operation you already need to do to understand years BC in the first place. And I would suggest that the result is easier for the person reading, and hopefully more people read a given text than write it. Going back to BC is the same kind of operation, though I’ll leave that as an exercise for the reader.

A small but relevant point is: how do you pronounce these? I would suggest still saying BC and AD but between the century and year rather than after the whole number. So where you would say “one eighty-three b-c” for 183 BC, with the new system you would say “one b-c eighteen” for the equivalent year 1B18. For the first century BC, “thirty-four b-c” for 34 BC becomes “zero b-c sixty-seven” for the equivalent 0B67.

That’s it. I’m curious what anyone else thinks. If one day there was an opportunity to change how years are written – like there was for measurements during the French Revolution – would this be a sensible model to switch to? If not, is there another model that’s better?